Instructions to use FreedomIntelligence/Apollo-2B-GGUF with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- llama-cpp-python

How to use FreedomIntelligence/Apollo-2B-GGUF with llama-cpp-python:

    # !pip install llama-cpp-python
    from llama_cpp import Llama

    llm = Llama.from_pretrained(
        repo_id="FreedomIntelligence/Apollo-2B-GGUF",
        filename="Apollo-2B-q8_0.gguf",
    )

    output = llm(
        "Once upon a time,",
        max_tokens=512,
        echo=True,
    )
    print(output)
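The call above prints the whole response dictionary. As a minimal sketch, assuming the response follows the OpenAI-style completion layout (a `choices` list whose entries carry a `text` field), the generated text could be pulled out with a small helper. The name `completion_text` and the stand-in response are hypothetical and exist only for illustration.

```python
from typing import Any, Mapping


def completion_text(output: Mapping[str, Any]) -> str:
    """Return the text of the first choice in a completion-style response."""
    choices = output.get("choices") or []
    if not choices:
        return ""
    return choices[0].get("text", "")


# Stand-in response with the assumed shape, so the sketch runs without a model.
sample_output = {"choices": [{"text": "Once upon a time, there was a clinic."}]}
print(completion_text(sample_output))
```

With a loaded model, the same helper would be called as `completion_text(output)`.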

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- llama.cpp

How to use FreedomIntelligence/Apollo-2B-GGUF with llama.cpp:

Install from brew

    brew install llama.cpp

    # Start a local OpenAI-compatible server with a web UI:
    llama-server -hf FreedomIntelligence/Apollo-2B-GGUF:Q8_0

    # Run inference directly in the terminal:
    llama-cli -hf FreedomIntelligence/Apollo-2B-GGUF:Q8_0

Install from WinGet (Windows)

    winget install llama.cpp

    # Start a local OpenAI-compatible server with a web UI:
    llama-server -hf FreedomIntelligence/Apollo-2B-GGUF:Q8_0

    # Run inference directly in the terminal:
    llama-cli -hf FreedomIntelligence/Apollo-2B-GGUF:Q8_0

Use pre-built binary

    # Download pre-built binary from:
    # https://github.com/ggerganov/llama.cpp/releases

    # Start a local OpenAI-compatible server with a web UI:
    ./llama-server -hf FreedomIntelligence/Apollo-2B-GGUF:Q8_0

    # Run inference directly in the terminal:
    ./llama-cli -hf FreedomIntelligence/Apollo-2B-GGUF:Q8_0

Build from source code

    git clone https://github.com/ggerganov/llama.cpp.git
    cd llama.cpp
    cmake -B build
    cmake --build build -j --target llama-server llama-cli

    # Start a local OpenAI-compatible server with a web UI:
    ./build/bin/llama-server -hf FreedomIntelligence/Apollo-2B-GGUF:Q8_0

    # Run inference directly in the terminal:
    ./build/bin/llama-cli -hf FreedomIntelligence/Apollo-2B-GGUF:Q8_0

Use Docker

docker model run hf.co/FreedomIntelligence/Apollo-2B-GGUF:Q8_0

- LM Studio

- Jan

- vLLM

How to use FreedomIntelligence/Apollo-2B-GGUF with vLLM:

Install from pip and serve model

    # Install vLLM from pip:
    pip install vllm

    # Start the vLLM server:
    vllm serve "FreedomIntelligence/Apollo-2B-GGUF"

    # Call the server using curl (OpenAI-compatible API):
    curl -X POST "http://localhost:8000/v1/completions" \
        -H "Content-Type: application/json" \
        --data '{
            "model": "FreedomIntelligence/Apollo-2B-GGUF",
            "prompt": "Once upon a time,",
            "max_tokens": 512,
            "temperature": 0.5
        }'

Use Docker

docker model run hf.co/FreedomIntelligence/Apollo-2B-GGUF:Q8_0
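The curl call in the vLLM instructions can also be issued from Python. The following is a minimal sketch using only the standard library; it assumes the server is reachable at the address shown in the curl example and that the response carries a `choices` list with a `text` field, as in the OpenAI completions format. The function names `build_completion_request` and `complete` are hypothetical.

```python
import json
import urllib.request

# Assumption: same host and port as in the curl example.
BASE_URL = "http://localhost:8000"


def build_completion_request(prompt: str, max_tokens: int = 512,
                             temperature: float = 0.5) -> urllib.request.Request:
    """Build a POST request mirroring the curl example's JSON payload."""
    payload = {
        "model": "FreedomIntelligence/Apollo-2B-GGUF",
        "prompt": prompt,
        "max_tokens": max_tokens,
        "temperature": temperature,
    }
    return urllib.request.Request(
        f"{BASE_URL}/v1/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(prompt: str) -> str:
    """Send the request and return the first completion (server must be running)."""
    with urllib.request.urlopen(build_completion_request(prompt)) as response:
        body = json.load(response)
    return body["choices"][0]["text"]


# Building the request does not touch the network.
request = build_completion_request("Once upon a time,")
print(request.full_url)
```

Calling `complete("Once upon a time,")` sends the request, so it only works while the server is up.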

- Ollama

How to use FreedomIntelligence/Apollo-2B-GGUF with Ollama:

ollama run hf.co/FreedomIntelligence/Apollo-2B-GGUF:Q8_0

- Unsloth Studio

How to use FreedomIntelligence/Apollo-2B-GGUF with Unsloth Studio:

Install Unsloth Studio (macOS, Linux, WSL)

    curl -fsSL https://unsloth.ai/install.sh | sh

    # Run unsloth studio
    unsloth studio -H 0.0.0.0 -p 8888

    # Then open http://localhost:8888 in your browser
    # Search for FreedomIntelligence/Apollo-2B-GGUF to start chatting

Install Unsloth Studio (Windows)

    irm https://unsloth.ai/install.ps1 | iex

    # Run unsloth studio
    unsloth studio -H 0.0.0.0 -p 8888

    # Then open http://localhost:8888 in your browser
    # Search for FreedomIntelligence/Apollo-2B-GGUF to start chatting

Using HuggingFace Spaces for Unsloth

    # No setup required
    # Open https://huggingface.co/spaces/unsloth/studio in your browser
    # Search for FreedomIntelligence/Apollo-2B-GGUF to start chatting

- Docker Model Runner

How to use FreedomIntelligence/Apollo-2B-GGUF with Docker Model Runner:

docker model run hf.co/FreedomIntelligence/Apollo-2B-GGUF:Q8_0

- Lemonade

How to use FreedomIntelligence/Apollo-2B-GGUF with Lemonade:

Pull the model

    # Download Lemonade from https://lemonade-server.ai/
    lemonade pull FreedomIntelligence/Apollo-2B-GGUF:Q8_0

Run and chat with the model

lemonade run user.Apollo-2B-GGUF-Q8_0

List all available models

lemonade list

Multilingual Medicine: Model, Dataset, Benchmark, Code

Covering English, Chinese, French, Hindi, Spanish, and Arabic so far

👨🏻‍💻 Github • 📃 Paper • 🌐 Demo • 🤗 ApolloCorpus • 🤗 XMedBench


🌈 Update

- [2024.03.07] Paper released.

- [2024.02.12] ApolloCorpus and XMedBench are published!🎉

- [2024.01.23] Apollo repo is published!🎉

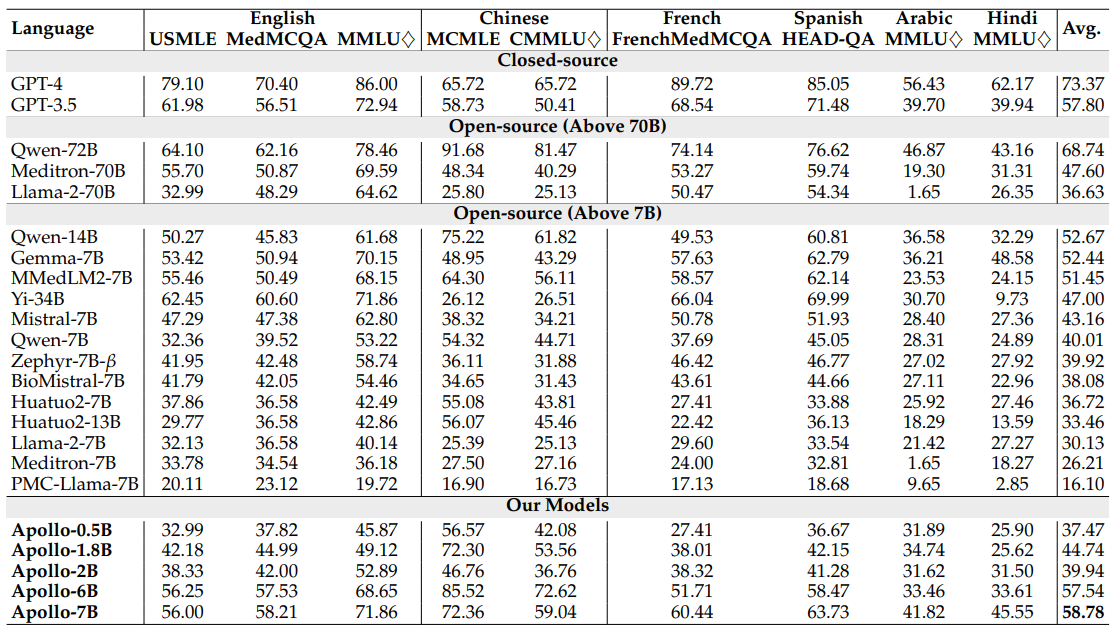

Results

🤗 Apollo-0.5B • 🤗 Apollo-1.8B • 🤗 Apollo-2B • 🤗 Apollo-6B • 🤗 Apollo-7B

Dataset & Evaluation

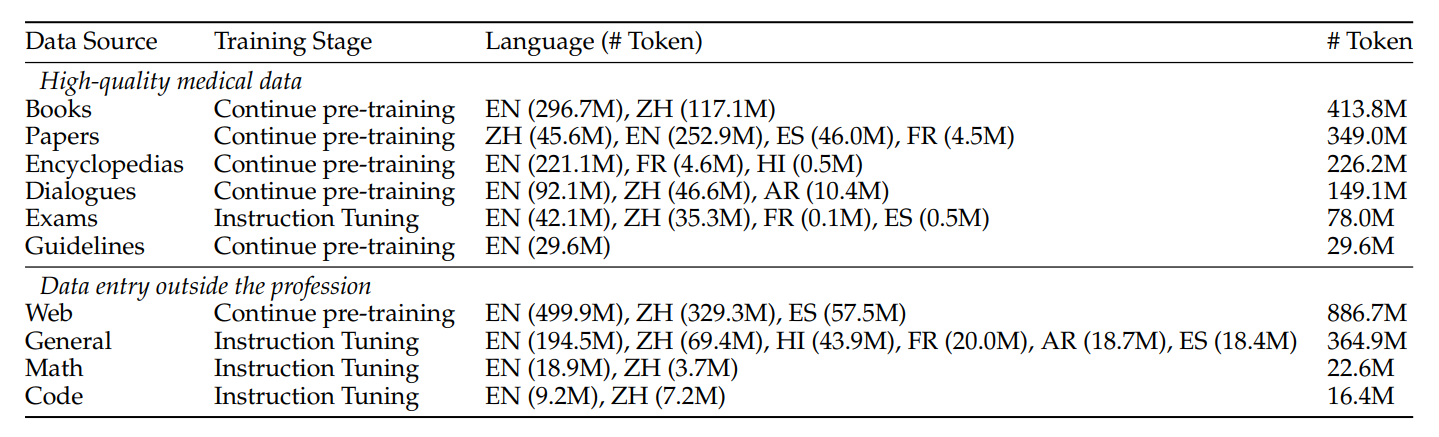

Dataset 🤗 ApolloCorpus


- Zip File

- Data category

  - Pretrain:
    - data item:
      - json_name: {data_source}_{language}_{data_type}.json
      - data_type: medicalBook, medicalGuideline, medicalPaper, medicalWeb (from online forums), medicalWiki
      - language: en (English), zh (Chinese), es (Spanish), fr (French), hi (Hindi)
      - data_type: qa (QA generated from text)
      - data_type==text: list of strings, in the form [ "string1", "string2", ... ]
      - data_type==qa: list of QA pairs (each a list of strings), in the form [ [ "q1", "a1", "q2", "a2", ... ], ... ]

  - SFT:
    - json_name: {data_source}_{language}.json
    - data_type: code, general, math, medicalExam, medicalPatient
    - data item: list of QA pairs (each a list of strings), in the form [ [ "q1", "a1", "q2", "a2", ... ], ... ]
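To make the QA layout above concrete, here is a minimal sketch that walks a list of QA items and yields (question, answer) tuples. It assumes each inner list alternates question and answer strings, as in the `"q1", "a1", "q2", "a2"` pattern. The function name `iter_qa_pairs` and the inline sample are hypothetical and stand in for a real ApolloCorpus file.

```python
import json
from typing import Iterator, List, Tuple


def iter_qa_pairs(dialogues: List[List[str]]) -> Iterator[Tuple[str, str]]:
    """Yield (question, answer) tuples from alternating q/a string lists."""
    for turns in dialogues:
        # Even positions hold questions, odd positions hold answers;
        # a trailing question without an answer is dropped by zip.
        for question, answer in zip(turns[0::2], turns[1::2]):
            yield question, answer


# Inline stand-in for the contents of a QA-format JSON file.
raw = '[["q1", "a1", "q2", "a2"], ["q3", "a3"]]'
pairs = list(iter_qa_pairs(json.loads(raw)))
print(pairs)
```

For a file on disk, the `json.loads(raw)` call would be replaced by `json.load` on the opened file.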

Evaluation 🤗 XMedBench


EN:

- MedQA-USMLE

- MedMCQA

- PubMedQA: Not used in the paper because its results fluctuated too much.

- MMLU-Medical

- Clinical knowledge, Medical genetics, Anatomy, Professional medicine, College biology, College medicine

ZH:

- MedQA-MCMLE

- CMB-single: Not used in the paper

- 2,000 randomly sampled multiple-choice questions with a single answer.

- CMMLU-Medical

- Anatomy, Clinical_knowledge, College_medicine, Genetics, Nutrition, Traditional_chinese_medicine, Virology

- CExam: Not used in the paper

- 2,000 randomly sampled multiple-choice questions.
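The 2,000-question subsets mentioned for CMB-single and CExam could be drawn along the following lines. This is only a sketch: the source does not state the sampling procedure or a seed, so the fixed seed, the function name `sample_questions`, and the placeholder question pool are all assumptions.

```python
import random
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def sample_questions(questions: Sequence[T], k: int = 2000, seed: int = 0) -> List[T]:
    """Draw k questions without replacement, reproducibly for a given seed."""
    if len(questions) <= k:
        # Fewer questions than requested: keep them all.
        return list(questions)
    # A dedicated Random instance avoids touching the global random state.
    return random.Random(seed).sample(questions, k)


# Placeholder pool; a real run would load the benchmark's questions instead.
pool = [f"question-{i}" for i in range(10_000)]
subset = sample_questions(pool)
print(len(subset))
```

Fixing the seed keeps the subset stable across runs, which matters when results are compared between models.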

ES: Head_qa

FR: Frenchmedmcqa

HI: MMLU_HI

- Clinical knowledge, Medical genetics, Anatomy, Professional medicine, College biology, College medicine

AR: MMLU_Ara

- Clinical knowledge, Medical genetics, Anatomy, Professional medicine, College biology, College medicine

Results reproduction


Waiting for Update

Citation

Please use the following citation if you intend to use our dataset for training or evaluation:

@misc{wang2024apollo,

title={Apollo: Lightweight Multilingual Medical LLMs towards Democratizing Medical AI to 6B People},

author={Xidong Wang and Nuo Chen and Junyin Chen and Yan Hu and Yidong Wang and Xiangbo Wu and Anningzhe Gao and Xiang Wan and Haizhou Li and Benyou Wang},

year={2024},

eprint={2403.03640},

archivePrefix={arXiv},

primaryClass={cs.CL}

}
